Objective

To assess the inter-observer agreement of the standard joint count between an experienced rheumatology professor (Prof) and a young rheumatology fellow (candidate), and to compare disease global assessment among the professor, the candidate and the patients.

Methods

This study included one hundred rheumatoid arthritis patients. For all patients, independent clinical evaluation was performed by two rheumatologists (professor and candidate) to detect tenderness in 28 joints and swelling in 26 joints. The study also involved global assessment of disease activity by the provider (professor and candidate) (EGA) as well as by the patient (PGA). The EGA was determined without previous knowledge of the patient's laboratory test results.

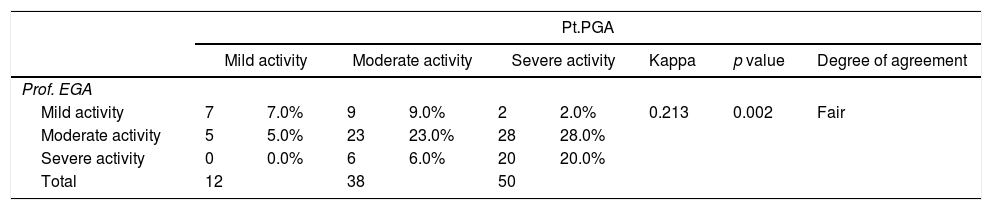

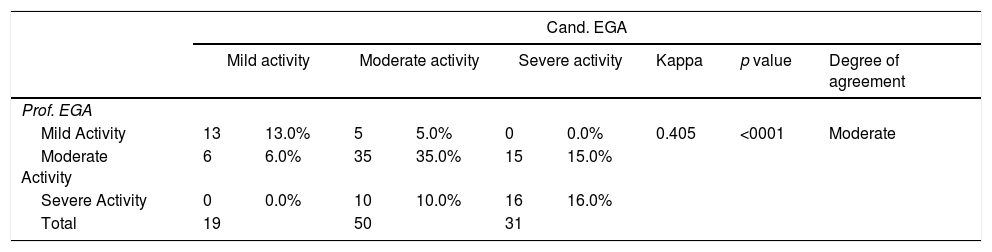

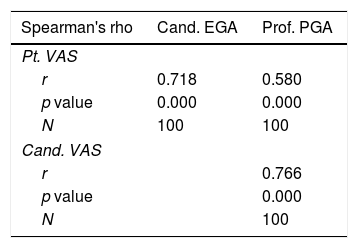

Results

A highly significant correlation between professor and candidate was found in both the number of tender joints (r=0.946, p<0.001) and the number of swollen joints (r=0.797, p<0.001). Regarding swollen joints, the highest agreement was in the right knee (0.929), while poor agreement was found in the right 5th MCP (0.049). Regarding tender joints, the highest agreement was in the right elbow (0.899), whereas the left 3rd PIP (0.462) showed the lowest agreement. Agreement analysis using kappa for disease global assessment showed moderate agreement between professor and candidate (0.405), fair agreement between professor and patient (0.213), and fair agreement between candidate and patient (0.367).

Conclusion

Inter-observer reliability was better for TJCs than for SJCs. Regarding SJCs, agreement was better in large joints such as the knees than in small joints such as the MCPs. Disease global assessment may show discrepancy between patients and physicians.

Rheumatoid arthritis (RA) is the most common chronic inflammatory joint disease.1 RA is characterized by chronic inflammation, synovial proliferation and excessive proinflammatory cytokine production, leading to cartilage and bone destruction.2 It is clear that early diagnosis, referral, and treatment of patients with RA result in improvement in clinical signs and prevention of joint destruction.3

Achievement of low disease activity or even remission is an attainable target in RA.4 Accurate assessment of disease activity and joint damage in RA is important in rheumatological practice to enable therapeutic decisions and to evaluate disease outcome and response to treatment.5,6 Joint examination is a prerequisite to the diagnosis of RA, and quantitative counts of swollen and tender joints remain the most specific tools for patient assessment.7 Disease severity is directly related to the number of swollen and tender joints. Consequently, research and clinical trials on the use of disease-modifying anti-rheumatic drugs and biologicals depend on reductions in the number of swollen and tender joints as key outcome measures.8

Joint counts are included in most indices of disease activity,9 and rheumatologists should include a joint count assessment for each RA patient at every visit.8 Although joint counts are crucial for the assessment of RA, their reliability is an issue.10 Several studies11–14 reported considerable variations in joint counts between individual observers and between centres, both in routine clinical practice and in clinical trials. Despite this, determining the number of swollen and tender joints is the most stable method of clinical assessment. This may explain why training to standardize methods15 increases the sensitivity of counting swollen and tender joints and reduces the variability of measurement, though it does not entirely abolish it.8

Patient global assessment (PGA) is one of the most widely used patient-reported outcomes in RA practice and is included in several composite scores for RA assessment. PGA is often assessed by a single question with a 0–10 or 0–100 scale.16 However, in RA, assessment of disease activity may differ between patients and physicians.17 Such discordance between patients and clinicians in their evaluation of RA activity has been widely noted.18,19 Most often, patients rate their RA as more active than clinicians do. This difference may raise questions about the validity of these measures, and about whose assessment should be used to guide treatment decisions.20

Thus, the aim of the current study was to evaluate inter-observer agreement between professor and candidate concerning swollen and tender joint counts, and to determine which joints show low inter-observer agreement. Another goal was to compare the provider global assessment (EGA) performed by the professor with that performed by the candidate on the one hand, and the EGA with the patient global assessment (PGA) on the other hand.

Patients and methods

This study included one hundred rheumatoid arthritis (RA) patients who fulfilled the ACR/EULAR 2010 Classification Criteria for RA.21 Patients were attending the Rheumatology and Rehabilitation Department of the Kasr El-Aini Hospitals & Specialized Manial Hospital, Cairo University. Patients’ ages ranged from 24 to 75 years with a mean of 45.12 years, and the mean disease duration was 8.14±6.84 years. The patients (6 males and 94 females) were recruited consecutively over a period of 7 months, from May 2014 to November 2014. All patients included in the study were subjected to full history taking and thorough clinical examination, including local joint examination.

Local joint evaluation was done by two rheumatologists who reached a consensus on joint assessment before the study: the first, a skilled rheumatology professor (Prof) widely experienced in joint evaluation, with more than thirty years' experience in university hospitals, and the second, a young rheumatology fellow (candidate) who had been trained by different rheumatology professors and had performed more than 500 supervised joint count examinations in about three years of training in a university hospital.

Clinical evaluation was performed independently and sequentially by the two rheumatologists on the same day to detect tenderness in 28 joints and swelling in 26 joints. The candidate evaluated each patient without being informed of the results of the professor's evaluation.

The following 26 joints were assessed bilaterally for tenderness and swelling: 10 metacarpophalangeal (MCP) joints, 10 proximal interphalangeal (PIP) joints, both elbows, both wrists and both knees. Both shoulder joints were assessed for tenderness only. Clinical inter-observer agreement for both tenderness and swelling was calculated.

The tender joint count (TJC) and the swollen joint count (SJC) were recorded for each patient. The total numbers of swollen and tender joints recorded for each patient by the professor were correlated with those recorded by the candidate using Spearman's rho test.

In addition, global assessment of disease activity for each patient was performed by the provider (professor and candidate) (EGA) on the one hand, and by the patient (PGA) on the other hand. The EGA was determined without previous knowledge of the patient's laboratory test results. The question for determining the PGA was, “How do you estimate your disease activity today?” The assessment was done using a 0–100 scale.22 The Clinical Disease Activity Index (CDAI) was used to assess disease activity by the professor and the candidate.23
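As an illustration of how a CDAI value is assembled, the sketch below uses the commonly published definition (tender joint count + swollen joint count + patient global + provider global, with each global score on a 0–10 scale) and the commonly used cut-offs (2.8, 10 and 22). Dividing the 0–100 global scores by 10 is an assumption made here for illustration; the source does not state how the scores were rescaled. The function names are hypothetical.

```python
def cdai(tjc, sjc, pga_0_100, ega_0_100):
    """Clinical Disease Activity Index from joint counts and global scores.

    Assumption: the 0-100 global assessments are rescaled to 0-10
    by dividing by 10 (not stated in the source).
    """
    for name, value in (("pga", pga_0_100), ("ega", ega_0_100)):
        if not 0 <= value <= 100:
            raise ValueError(f"{name} must lie between 0 and 100")
    if tjc < 0 or sjc < 0:
        raise ValueError("joint counts cannot be negative")
    return tjc + sjc + pga_0_100 / 10 + ega_0_100 / 10


def cdai_category(score):
    """Map a CDAI score to an activity band using the usual cut-offs."""
    if score <= 2.8:
        return "remission"
    if score <= 10:
        return "low"        # reported as "mild" in this study
    if score <= 22:
        return "moderate"
    return "high"           # reported as "severe" in this study


# Hypothetical patient: 4 tender joints, 3 swollen joints, PGA 50, EGA 40
score = cdai(4, 3, 50, 40)
print(score, cdai_category(score))
```

Because two of the four CDAI components are examiner-dependent joint counts and a third is the examiner's own global score, disagreement between examiners carries directly into the index.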

Statistical methods

Data management and statistical analysis were performed using Statistical Package for Social Sciences version 21 (SPSS Inc, Chicago, IL).

Numerical data were summarized using mean and standard deviation or median and range. Categorical data were summarized as percentages. Correlations were determined using Spearman's rho test. As a measure of reliability, Cohen's kappa was used to assess the degree of agreement between professor and candidate. All p-values are two-sided. p-values<0.05 were considered significant.
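For readers unfamiliar with the statistic, the following minimal sketch shows how Cohen's kappa can be computed for one joint from two examiners' findings. The analysis in the study was done in SPSS; this code and its data are hypothetical and serve only to illustrate the definition, kappa = (p_o − p_e) / (1 − p_e), where p_o is the observed agreement and p_e the agreement expected by chance.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Unweighted Cohen's kappa for two raters scoring the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("ratings must be non-empty and of equal length")
    n = len(rater_a)
    # Observed agreement: share of items on which both raters agree.
    p_o = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of the raters' marginal proportions,
    # summed over all categories.
    count_a, count_b = Counter(rater_a), Counter(rater_b)
    categories = set(count_a) | set(count_b)
    p_e = sum(count_a[c] * count_b[c] for c in categories) / (n * n)
    if p_e == 1.0:
        raise ValueError("kappa is undefined when chance agreement is 1")
    return (p_o - p_e) / (1 - p_e)


# Hypothetical findings for one joint in ten patients (1 = swollen, 0 = not)
professor = [1, 1, 0, 0, 1, 0, 1, 0, 0, 1]
candidate = [1, 0, 0, 0, 1, 0, 1, 1, 0, 1]
print(round(cohens_kappa(professor, candidate), 3))  # 0.6
```

In this made-up example the examiners agree on 8 of 10 patients, but half of that agreement would be expected by chance, so kappa is 0.6 rather than 0.8.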

Correlation

In statistics, the correlation coefficient r measures the strength and direction of a linear relationship between two variables on a scatter plot. The value of r always lies between −1 and +1: +1 indicates a perfect positive (uphill) linear relationship and −1 a perfect negative (downhill) linear relationship.

The magnitude of agreement by the kappa coefficient is graded as follows: almost perfect 0.8–1, substantial 0.6–0.8, moderate 0.4–0.6, fair 0.2–0.4, poor 0–0.2, and no agreement for values less than zero.
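The grading scale can be expressed as a small lookup. Because the bands as stated share their end points (for example, 0.8 appears in two bands), this sketch assumes that a boundary value belongs to the higher band; that tie-breaking rule is an assumption and is not stated in the source. The function name is hypothetical.

```python
def grade_kappa(kappa):
    """Return the agreement label for a kappa value.

    Assumption: a value exactly on a boundary (0.2, 0.4, 0.6, 0.8)
    is assigned to the higher band.
    """
    if kappa > 1:
        raise ValueError("kappa cannot exceed 1")
    if kappa < 0:
        return "No agreement"
    if kappa < 0.2:
        return "Poor"
    if kappa < 0.4:
        return "Fair"
    if kappa < 0.6:
        return "Moderate"
    if kappa < 0.8:
        return "Substantial"
    return "Almost perfect"


# Values reported in this study
for value in (0.929, 0.754, 0.405, 0.367, 0.049):
    print(value, grade_kappa(value))
```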

Results

Concerning tender and swollen joint examination

We compared the results of the examination performed by the professor with those of the examination performed by the candidate regarding tenderness in 28 joints and swelling in 26 joints in all patients involved in this study. The degree of agreement was measured by Cohen's kappa.

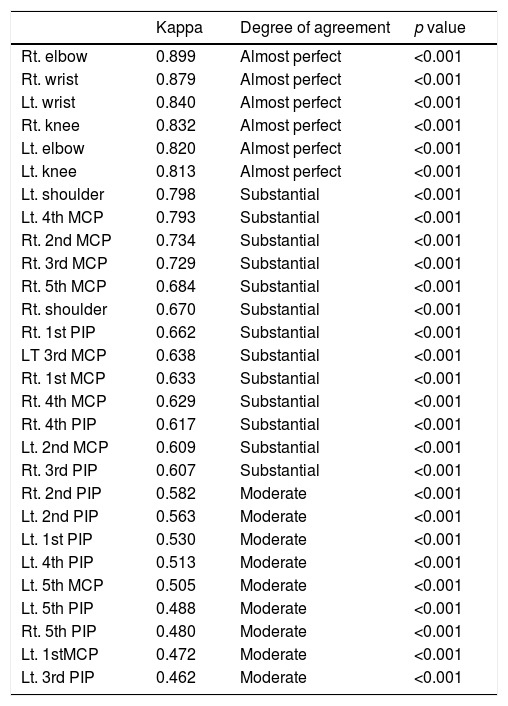

Regarding tender joints, the highest agreement was in the right elbow (0.899), while the lowest agreement was in the left 3rd PIP (0.462) as shown in Table 1.

Table 1. Tender joints from highest to lowest degree of agreement.

| Joint | Kappa | Degree of agreement | p value |
|---|---|---|---|
| Rt. elbow | 0.899 | Almost perfect | <0.001 |
| Rt. wrist | 0.879 | Almost perfect | <0.001 |
| Lt. wrist | 0.840 | Almost perfect | <0.001 |
| Rt. knee | 0.832 | Almost perfect | <0.001 |
| Lt. elbow | 0.820 | Almost perfect | <0.001 |
| Lt. knee | 0.813 | Almost perfect | <0.001 |
| Lt. shoulder | 0.798 | Substantial | <0.001 |
| Lt. 4th MCP | 0.793 | Substantial | <0.001 |
| Rt. 2nd MCP | 0.734 | Substantial | <0.001 |
| Rt. 3rd MCP | 0.729 | Substantial | <0.001 |
| Rt. 5th MCP | 0.684 | Substantial | <0.001 |
| Rt. shoulder | 0.670 | Substantial | <0.001 |
| Rt. 1st PIP | 0.662 | Substantial | <0.001 |
| Lt. 3rd MCP | 0.638 | Substantial | <0.001 |
| Rt. 1st MCP | 0.633 | Substantial | <0.001 |
| Rt. 4th MCP | 0.629 | Substantial | <0.001 |
| Rt. 4th PIP | 0.617 | Substantial | <0.001 |
| Lt. 2nd MCP | 0.609 | Substantial | <0.001 |
| Rt. 3rd PIP | 0.607 | Substantial | <0.001 |
| Rt. 2nd PIP | 0.582 | Moderate | <0.001 |
| Lt. 2nd PIP | 0.563 | Moderate | <0.001 |
| Lt. 1st PIP | 0.530 | Moderate | <0.001 |
| Lt. 4th PIP | 0.513 | Moderate | <0.001 |
| Lt. 5th MCP | 0.505 | Moderate | <0.001 |
| Lt. 5th PIP | 0.488 | Moderate | <0.001 |
| Rt. 5th PIP | 0.480 | Moderate | <0.001 |
| Lt. 1st MCP | 0.472 | Moderate | <0.001 |
| Lt. 3rd PIP | 0.462 | Moderate | <0.001 |

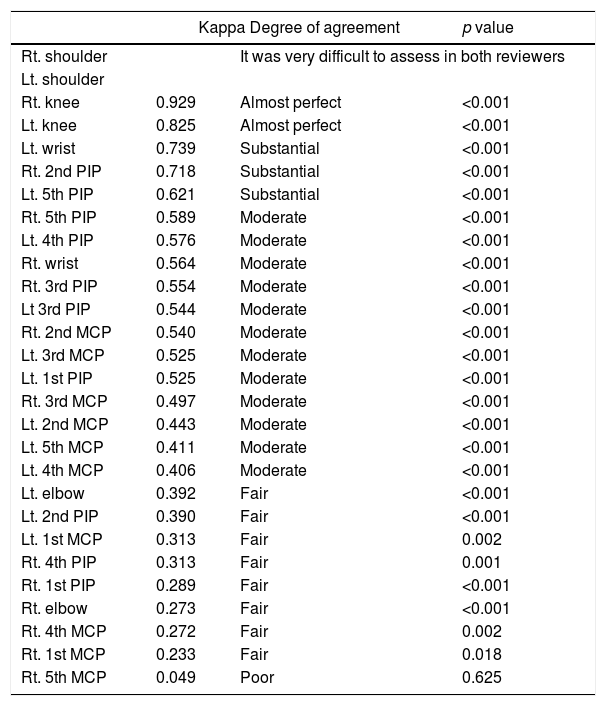

Regarding swollen joints, the highest agreement was in the right knee (0.929), while agreement was poor in the right 5th MCP (0.049) as shown in Table 2.

Table 2. Swollen joints from highest to lowest degree of agreement.

| Joint | Kappa | Degree of agreement | p value |
|---|---|---|---|
| Rt. shoulder | Very difficult to assess for both reviewers | | |
| Lt. shoulder | Very difficult to assess for both reviewers | | |
| Rt. knee | 0.929 | Almost perfect | <0.001 |
| Lt. knee | 0.825 | Almost perfect | <0.001 |
| Lt. wrist | 0.739 | Substantial | <0.001 |
| Rt. 2nd PIP | 0.718 | Substantial | <0.001 |
| Lt. 5th PIP | 0.621 | Substantial | <0.001 |
| Rt. 5th PIP | 0.589 | Moderate | <0.001 |
| Lt. 4th PIP | 0.576 | Moderate | <0.001 |
| Rt. wrist | 0.564 | Moderate | <0.001 |
| Rt. 3rd PIP | 0.554 | Moderate | <0.001 |
| Lt. 3rd PIP | 0.544 | Moderate | <0.001 |
| Rt. 2nd MCP | 0.540 | Moderate | <0.001 |
| Lt. 3rd MCP | 0.525 | Moderate | <0.001 |
| Lt. 1st PIP | 0.525 | Moderate | <0.001 |
| Rt. 3rd MCP | 0.497 | Moderate | <0.001 |
| Lt. 2nd MCP | 0.443 | Moderate | <0.001 |
| Lt. 5th MCP | 0.411 | Moderate | <0.001 |
| Lt. 4th MCP | 0.406 | Moderate | <0.001 |
| Lt. elbow | 0.392 | Fair | <0.001 |
| Lt. 2nd PIP | 0.390 | Fair | <0.001 |
| Lt. 1st MCP | 0.313 | Fair | 0.002 |
| Rt. 4th PIP | 0.313 | Fair | 0.001 |
| Rt. 1st PIP | 0.289 | Fair | <0.001 |
| Rt. elbow | 0.273 | Fair | <0.001 |
| Rt. 4th MCP | 0.272 | Fair | 0.002 |
| Rt. 1st MCP | 0.233 | Fair | 0.018 |
| Rt. 5th MCP | 0.049 | Poor | 0.625 |

Details of agreement regarding tender and swollen joints are shown in Tables 3–4 (in descending order from highest to lowest agreement).

The total numbers of swollen and tender joints recorded for each patient by the professor were correlated with those recorded by the candidate using Spearman's rho test. The results showed a highly significant correlation for both tender joints (p<0.001) and swollen joints (p<0.001); however, the correlation was stronger for tender joints (r=0.946) than for swollen joints (r=0.797).
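As a sketch of the calculation behind these coefficients, Spearman's rho can be obtained by ranking each examiner's counts (tied values share the mean of their ranks) and computing the Pearson correlation of the ranks. The study itself used SPSS; the code and the joint counts below are hypothetical and only illustrate the method.

```python
from math import sqrt


def average_ranks(values):
    """Rank values from 1 to n; tied values receive the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j to cover the whole run of tied values.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def spearman_rho(x, y):
    """Spearman's rho: Pearson correlation of the average ranks."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two sequences of equal length (at least 2)")
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(x)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    var_x = sum((a - mx) ** 2 for a in rx)
    var_y = sum((b - my) ** 2 for b in ry)
    if var_x == 0 or var_y == 0:
        raise ValueError("rho is undefined when one variable is constant")
    return cov / sqrt(var_x * var_y)


# Hypothetical tender joint counts for six patients
prof_tjc = [2, 5, 8, 12, 0, 7]
cand_tjc = [3, 5, 9, 11, 1, 6]
print(spearman_rho(prof_tjc, cand_tjc))
```

Note that a high rho only shows that the two examiners order patients similarly; it does not show that they score the same individual joints, which is why per-joint kappa values are reported separately.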

Disease global assessment

According to the EGA of the professor, there were 18 patients with mild activity, 56 patients with moderate activity and 26 patients with severe activity.

According to the EGA of the candidate, there were 19 patients with mild activity, 50 patients with moderate activity and 31 patients with severe activity.

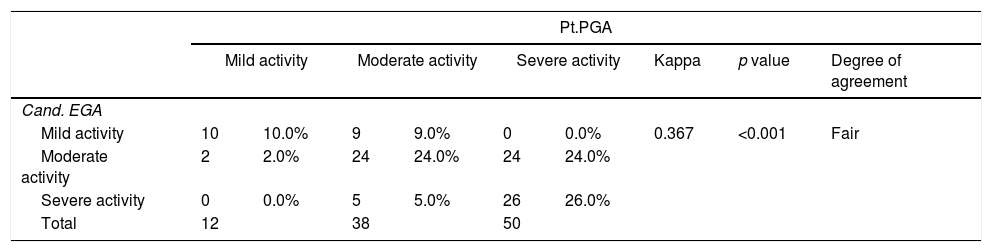

According to the PGA of the patients, there were 12 patients with mild activity, 38 patients with moderate activity and 50 patients with severe activity.

Agreement analysis using kappa showed:

- Moderate agreement between professor and candidate (0.405)

- Fair agreement between professor and patient (0.213)

- Fair agreement between candidate and patient (0.367)

Details are shown in the following Tables 3–5.
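To give a feel for what these kappa values mean in terms of raw agreement, the sketch below back-calculates the observed agreement implied by each kappa from the category counts reported above. It assumes unweighted Cohen's kappa on the three categories (mild, moderate, severe); the source does not state whether a weighted kappa was used, and the implied percentages are not reported in the source.

```python
def expected_agreement(counts_a, counts_b):
    """Chance agreement from two raters' category counts (marginals)."""
    n_a, n_b = sum(counts_a.values()), sum(counts_b.values())
    return sum(
        (counts_a[c] / n_a) * (counts_b.get(c, 0) / n_b) for c in counts_a
    )


def implied_observed_agreement(kappa, p_e):
    """Invert kappa = (p_o - p_e) / (1 - p_e) to recover p_o."""
    return kappa * (1 - p_e) + p_e


# Category counts as reported in the text (n = 100 for each rater)
professor = {"mild": 18, "moderate": 56, "severe": 26}
candidate = {"mild": 19, "moderate": 50, "severe": 31}
patient = {"mild": 12, "moderate": 38, "severe": 50}

pairs = {
    "professor vs candidate": (professor, candidate, 0.405),
    "professor vs patient": (professor, patient, 0.213),
    "candidate vs patient": (candidate, patient, 0.367),
}
for label, (a, b, kappa) in pairs.items():
    p_e = expected_agreement(a, b)
    p_o = implied_observed_agreement(kappa, p_e)
    print(f"{label}: chance {p_e:.3f}, implied observed {p_o:.2f}")
```

Under these assumptions, the implied observed agreement is roughly 0.64 for professor and candidate, 0.50 for professor and patient, and 0.60 for candidate and patient.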

A highly significant correlation was found for each pair (professor and candidate, professor and patient, candidate and patient); however, the r value was highest for professor and candidate (r=0.766) and lowest for professor and patient (r=0.580), as shown in Table 6.

Using the CDAI for assessment of disease activity, we found:

- Remission in 2 patients by the professor and in 1 patient by the candidate.

- Mild activity in 12 patients by the professor and in 11 patients by the candidate.

- Moderate activity in 28 patients by the professor and in 30 patients by the candidate.

- Severe activity in 58 patients by both the professor and the candidate.

Agreement in CDAI between professor and candidate using kappa was substantial (0.754).

Discussion

Accurate assessment of disease activity in rheumatoid arthritis (RA) is crucial for establishing disease severity and monitoring response to treatment.24 Counting the number of swollen joints is a clinical method of quantifying the amount of inflamed synovial tissue.25 However, one of the most important limitations of the joint count is its poor reproducibility, which requires it to be performed by the same observer at each visit.7 In addition, discordance between patient and physician ratings of RA severity occurs in clinical practice and correlates with pain scores and measurements of joint disease.17

Thus, in this study we tried to assess the inter-observer agreement of the standard joint count between an experienced professor and a young candidate, to show in which joints inter-observer reproducibility could be of value, and to compare global assessment among the professor, the candidate and the patients.

In this study, kappa was used to evaluate the degree of agreement between professor and candidate regarding joint tenderness and swelling.

Regarding swollen joints, agreement ranged from poor, as in the right 5th MCP (0.049), to almost perfect, as in the right knee (0.929); however, agreement was fair to moderate in the vast majority of the joints. It is to be noted that agreement was much better in large joints such as the knees than in small joints such as the MCPs.

Regarding tender joints, agreement ranged from moderate, as in the left 3rd PIP (0.462), to almost perfect, as in the right elbow (0.899). However, agreement was moderate to substantial in the vast majority of the joints, and it is also to be noted that agreement was much better in large joints such as the elbows, wrists and knees than in small joints such as the MCPs and PIPs.

Salaffi et al.9 conducted a similar study to assess the inter-observer agreement of standard joint count and to compare clinical examination with grey scale ultrasonography (US). Their study was conducted on 44 patients with recent-onset RA (disease duration<2 years), and they reported fair to moderate inter-observer agreement on individual joint counts for either tenderness or joint swelling apart from the glenohumeral joint.

The results reported by Salaffi et al.9 were quite similar to ours. Nevertheless, inter-observer agreement was better in our study, which may be explained by the type of patients included in Salaffi's study (recent-onset RA) and may also be due to the lesser experience of the second examiner in Salaffi's study (who had examined only 300 joints before the study).

Regarding disease global assessment, using kappa we found moderate agreement between professor and candidate, while fair agreement was found between candidate and patient as well as between professor and patient. Such discrepancy between patients and physicians in their perceptions of rheumatoid arthritis disease activity was reported by Studenic et al.26 in their study conducted on 646 rheumatoid arthritis patients. Khan et al.27 also found that nearly 36% of patients had discordance in RA activity assessment with their physicians. This may be explained by the fact that pain was overwhelmingly the single most important determinant of patient global assessment, followed by fatigue. In contrast, physician global assessment was most influenced by swollen joint count (SJC), followed by erythrocyte sedimentation rate (ESR).27 Thus, it is commonly reported that in RA, physicians tend to rate disease activity lower than patients; however, scoring differences in both directions have been reported.17,28 Similarly, Hernández-Cruz and Cardiel29 reported that the reliability of most of the outcome measures for RA was good, especially for those measurements evaluated by a rheumatologist, while those requiring patient participation need to be improved.

Another study24 that evaluated the degree of patient-physician discordance in assessment of global disease severity in RA showed that nearly one third of RA patients differed from their physicians by a meaningful degree, and that discordance was much higher in patients with depressive symptoms. Gvozdenovic et al.30 found differences between patient global disease activity (PtGDA) and physician global disease activity (PhGDA) that varied from one country to another, and attributed this to the culture and level of education of each country.

Nikiphorou et al.16 reported that the limitations in the use of PGA in RA may be due to the several possible ways of measuring it, including the intended assessment or underlying concept (i.e., global health versus disease activity), and that variations in wording and phrasing may lead to differences in interpretation of PGA.

Finally, inter-observer agreement for swollen joints in our study ranged from fair to moderate, so further training, standardization and specification may be required to improve it, especially given the multiple treatment options and biological treatments available nowadays, the use of which depends mainly on clinical evaluation and the patient's disease activity. Attention should also be paid to improving disease global assessment, especially the patient's version (PGA), to improve the assessment of RA patients and therefore treatment decisions.

Conflict of interest

None declared.